Thoughts on AI

I don't like where the tech industry (and, really, the world at large) is going with LLM's (a.k.a. "AI").

There. I said it. *phew!*

For all of the two people who might read the things I occasionally post here, that probably isn't a big surprise if they had noticed the footer text found on every page on this site. Also, my use of quotes around "AI" is another pretty big tell I suppose.

I feel compelled to write some words on this topic here (even though I'd really rather not) because this technology is being thoughtlessly jammed into every single part of our lives lately. I increasingly feel like keeping my thoughts about this to myself is not helping my sanity.

This is not going to be some amazingly well-researched piece with tons of data points to back up all of my thoughts. I don't really view that as a problem anyway since the vast majority of the people who are jamming this stuff into our lives daily don't back up any of their claims with actual data either.

What we were promised, and what we got instead

There's a bit of a meme that you can find repeated in various forms since all of this LLM-hype began that goes something like:

We were promised AI that would do our laundry and wash our dishes letting us spend more time on our creative art projects, but instead we got AI that does our creative art projects while we still have to do our laundry and wash our dishes.

It's perhaps a little bit over the top, as LLMs are certainly useful in some more mundane or "grunt work"-type tasks.

Now to be fair, I don't know if we were ever really "promised" anything AI-related ever. But as a kid growing up in the 90's watching science-fiction like Star Trek, it certainly did seem like AI was going to be some crazy useful thing that could do all kinds of extremely useful things for us in the future. Helping us solve hard problems, assisting us with research, saving us from making potentially fatal mistakes, and more.

Over the past couple years, we now have AI tools that can generate artwork, videos, creative writing (stories), and even code!

While I can perhaps maybe see some of the practical applications of generating some artwork and videos through the use of AI (even if I may disagree with it personally) just as a means to an end and not at all as a "creative" endeavour (maybe you need some quick placeholder stuff, for example), I really struggle to understand why an actual artist or author would want to use AI to assist with creative art or writing projects. Any of the possible explanations I come up with lead me back to the same conclusion: someone doing something that they would really rather not be doing. So in the case of an author using AI to assist in their work on a story they are writing ... well, I start wondering if they even really wanted to write a story in the first place. I dunno, it doesn't make sense to me.

Coding is included in this for me as I do view coding as a creative endeavour myself. Sure, it's not always the case. Sometimes code can be quite monotonous. But I think there are a great many projects where the approach taking to coding a solution is genuinely a creative work. I definitely am one of the people who view coding as more art than science, though there are certainly aspects of both involved.

And so yes, I do mourn the loss of this creative work in an industry that seems increasingly gung-ho about not ever writing another line of code by hand again.

Your job isn't to write code anymore, it's to direct and review

This is an often repeated claim in software engineering circles nowadays, and this to me is where the previously mentioned meme (or at least one variation of it) comes into play.

The idea behind this claim is that software engineering is now AI-assisted where "agents" are now writing code for you, given direction from prompts and a variety of Markdown files that live in your project to help direct things and provide adequate context to keep it from going too far off the rails. You're not writing code by hand anymore, you're prompting the agent(s) to do the tasks you need and then you're reviewing their output and either accepting it or asking it to make further revisions if you spot any mistakes.

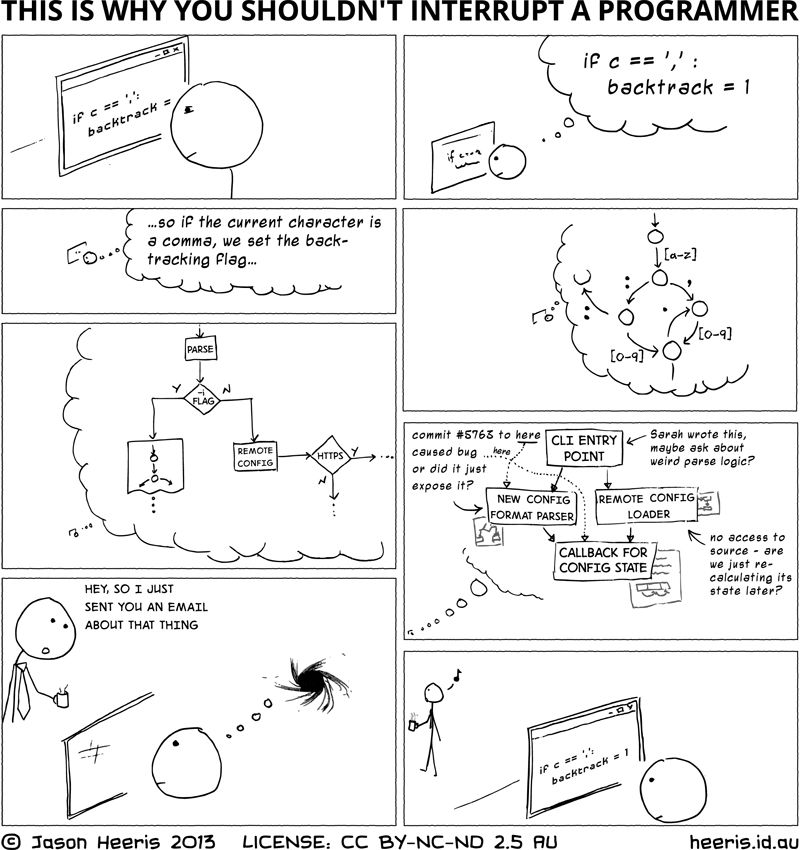

As anyone with any sliver of experience in software development will tell you, reading and understanding code that you did not write is often the hardest part of the job. Reviewing is not easy. When you're reading code, you are not just reading code, you're building up an entire mental model of what is happening in that code. This includes a model of the data that is being represented and manipulated in some way. Code almost always interacts with other pieces of code, and other pieces of data, and these interactions branch off and form a complex spiderweb of interconnected logic and processes.

Reading your own code can sometimes be easier. Granted, if you wrote it months ago and haven't seen it since, it can be difficult too. There are many funny memes about this nowadays. But at the very least, you might have a slight advantage because you just have to get back into your own head, your own thought process. Reading someone else's code requires you to get into someone else's thought process. That can be a challenge! We don't all think through problems in the same way. We don't all perceive things in the same way.

AI-generated code is obviously not your own code (you didn't write it, you prompted for it). And it wasn't generated by a thinking intelligence, it was generated by a very complex unthinking probabilistic token generating machine. Have fun getting into the "thought process" of that!

I've always liked this old comic that touches on how complex some of the mental models that you can be working with as a software developer get. And also how easily they can vanish too.

The point here is that it takes time to read and understand code. It takes a lot of time to read and understand lots of code. One of the tell-tale signs of AI-generated code (in my experience) is verbose code that doesn't always make use of your existing abstractions and even creates new (semi-)redundant ones. In other words, AI tools are often very happy to generate lots and lots of potentially unnecessary code for you. You have to work very hard to keep it on a tight leash, requiring very high attention to detail during review to distinguish between good AI-generated code and AI-generated slop. This takes time to do properly.

The thing with code is that more is usually not better. Decades ago lines of code was used as one of the metrics by which to measure software developer productivity. For many years we have all been able to collectively breathe sighs of relief, secure in the knowledge that we had moved beyond that extremely flawed way of thinking. Speaking very honestly, I sometimes feel like my most productive days are where I removed large amounts of code and replaced them with better, simpler algorithms or abstractions. I know many developers who would echo similar feelings.

But less code is not necessarily simpler to read and understand either. It can be. But sometimes, for example, a very tight algorithm can be tricky to understand in full. Sometimes I also think slightly more verbose ways of writing code can be easier to read "at a glance." Basically, it's not always black-and-white. Most often it's a whole bunch of shades of grey.

Telling developers that "you just need to review now" is wild to me. Reviewing has always been a big part of the job, but the way this is presented nowadays always feels like someone is saying this in a "it's no big deal" kind of way. Nothing could be further from the truth. The reality is that it happens to be one of the most mentally draining and difficult parts of the job and simultaneously is an incredibly important part of the job. No developers that I know love reviewing code. Everyone grumbles about it, to varying degrees. Everyone procrastinates on their pull request review duties. This is also one of the reasons a lot of developers half-ass it. People are lazy, especially when it comes to things they don't like doing.

The idea that you're removing what many developers view as one of the most fun parts of the job (writing code, especially greenfield development) and largely replacing it with the least fun part of the job just does not bode well to me with all of the wild claims of productivity increases on one hand and the need to keep any sense of software quality on the other.

How productive are you really going to be long-term if your day-to-day is full of something you don't like doing all that much? And this is before we even get to talking about the quality of your typical code review ...

Many developers don't even review code

I know that I personally have plenty of stories of authoring a pull request with several hundred (if not more) lines of code changed and then tagging other members on my team asking for a review only to receive a notification that it was approved just a few minutes later. There is no way in this universe that someone read and fully understood my big change in just a few minutes!

This is such a common meme in this industry ("LGTM! :+1:") because it is so incredibly relatable. Most developers I know also have stories about this.

I've long held the belief that people don't really read anymore. Not just in tech, but everywhere. People just skim. I don't have any data to back that up, only anecdotes. But I find it interesting that every time I share that with anyone else, they always nod in full agreement. Everyone always has stories to share in support of that. And it also feels like this is just getting worse over time.

So, when you see some data from surveys just recently which show simultaneously that:

- 96% of developers don't fully trust that AI-generated code is functionally correct, and

- Only 48% of developers always check their AI-assisted code before committing

You really start to wonder what the actual fuck is going to happen in the future with increasingly prevalent AI-assisted coding. Only half of the developers who don't fully trust AI-generated code are always reviewing AI-assisted code? Are you fucking kidding me right now?

If many developers are so bad at reviewing code, with many just not doing it at all (perhaps at best, just skimming code changes during pull request reviews), what does that ultimately mean for software quality going forward?

I feel like that is perhaps an increasingly rhetorical question given that we seem to be seeing an only increasing number of cases of software bugs and downtime from tech companies loudly and proudly claiming that large portions (if not all) of their product's code is written with AI-assistance.

Writing code helps your understanding, and with reviewing

I think there's a lot of people out there who don't write code at all who perhaps think that the act of writing code is not unlike any other "low skill" manual labour type of work. Sure, you have to learn the language and some other software tools and details to be able to write code, but you're just building something from a specification, right? How hard can that really be? Why don't we just skip all that grunt work?

The problem is that writing code is both similar to and different from something like constructing a building, or other types of labour that people try to compare coding to.

On the one hand, yes, the act of writing code is essentially building something digitally.

But on the other hand, I think it is important to understand that writing code in a complex project also helps improve your understanding of the problem domain. Because unlike with the construction of a building or a bridge, a software project is very likely much more than just a structure. It's a complex process that is performing complex logic. And it might even be interacting with other external complex processes. It is taking inputs, executing some series of transformations, and producing some desired outputs. The logic behind those "transformations" has to be carefully planned and architected. The inputs in many cases may have to be massaged or otherwise prepared. The outputs may be expected to vary in many wonderful ways.

One of the main problems with the Waterfall method of running a software development project was that the idea that you could reliably do full upfront requirements gathering and project specification to the point that the developers were essentially "painting by numbers" to build the project was, most of the time, a fantasy. Most developers know that requirements change over time. The stakeholders of a project often don't even fully understand what they even need in the first place. Our understanding of the problem changes during the course of the project. As we write the code to do stuff and as we test builds over the course of the project, we start understanding the nuances of the problem better and we spot gaps in the specifications. We have to constantly be refining things. The nitty-gritty details end up being extremely important.

The act of writing code in a complex software project also helps a developer further build up and reinforce their own mental model of the project and how the code and data interact. This helps with further development of the project but it also helps with code review. A developer with a stronger familiarity of a codebase and with a stronger mental model of a project will be far more effective at review of code changes in that same project than another developer who lacks those things.

Additionally, this helps tremendously with debugging code! A developer with a strong mental model of a project will be far better at debugging code in that project than a developer who does not.

I'm not saying that you cannot do these things without a strong mental model of a project. But you won't be nearly as effective at it.

My view here is that as a developer, if you're not actively participating in actually writing some of the code yourself in a project that you're working on, you are not operating at full effectiveness within the context of that project.

The allure of "low code"

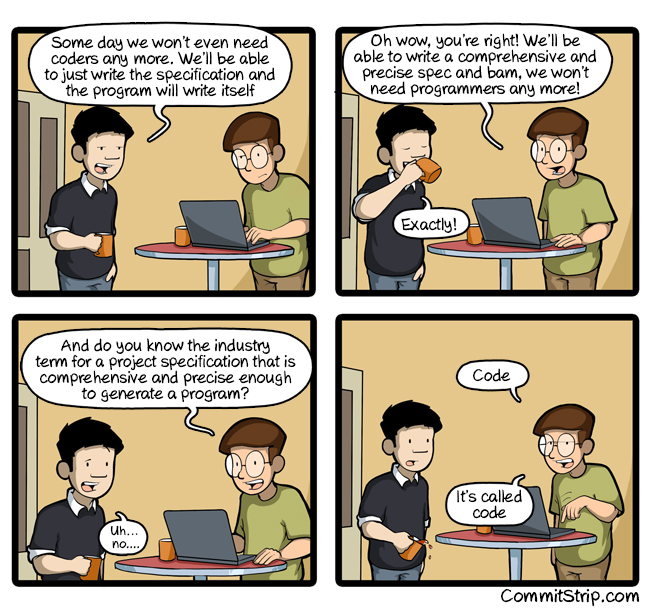

There are many cycles in this industry that repeat over the years and one of them is the "low code" idea. This is the idea of replacing the act of writing code with something higher level that doesn't require actual coding. Instead allowing you to create a working program visually or maybe just by writing plain English requirements or specifications.

This idea is many decades old by now. Spoiler alert for anyone who is not familiar with this particular hype cycle: It never pans out.

The way that I've personally seen this unfold in practice is that a lot of money and time are spent on building a solution with these tools, but the end result is always a massively more unmaintainable mess than if the project had initially been approached with a more traditional software engineering method instead. The solution that is built ages like milk and then even worse, it becomes hugely expensive to maintain over time and then to eventually migrate to something else later, because eventually the vendor of whatever "low code" tool you used went under or otherwise stopped supporting the product.

Just like with LLMs in this current hype cycle, these technologies typically demo really, really, really well. LLMs demo amazingly well. They very easily attract people and many potential use-cases are readily apparent to the average person. It's usually very hard to explain to the people that are attracted to them why they might be a bad idea for some of these use-cases over the long term.

The cost of offloading your thinking

I think this can be very simply summed up with "if you don't use it, you will lose it."

This is of course referring to how skills atrophy over time when you don't use them and I think that almost everyone can relate to this. How often have you encountered a situation where you were suddenly tasked with doing something that you remembered doing well many years ago (e.g., playing a musical instrument), but now when you tried to pick it up again suddenly realized you were very out of practice and could barely do it at all. I think almost all of us have been in that situation before.

Coding is no different. If you haven't written code in a long time and then are suddenly tasked with doing it again, you will not be as effective as you once were. This is a very simple truth.

When it comes to AI-assisted coding however, you don't have to take my word for it. You can get it straight from the horse's mouth: Anthropic did a study very recently about how AI assistance affects coding skills. The findings indicate that there are measurable differences between the use of AI assistance or not for coding. The study participants who used AI assistance showed a measurable and statistically significant decrease in their understanding of code overall.

The most fascinating thing to me is that this finding feels like it should be common sense.

If you think about it logically, I think most people would agree that it makes sense. If you skip over something (you take a shortcut), then you won't necessarily learn or retain understanding of how that something works, because you didn't actually do it.

I would also add that thinking through problems itself is a skill like any other. If you're offloading your thinking to an AI, you're probably going to be measurably worse at that too, given enough time!

Quality

An important question that arises (or, at least, should arise) with the use of AI is how good/accurate/correct are the results.

Ultimately, the answer is that "it varies."

Honestly, that is really my experience. And I think that is also the majority of people's experiences, if they are being honest about it. A lot of this really does point to the fact that, while we call it "AI" which of course stands for "Artificial Intelligence", it really doesn't seem all that intelligent the more you use it and the more mistakes you catch it making. I've heard some funny alternate meanings for "AI" which are usually very apt, such as "Almost Intelligent."

The underlying technology, LLMs, or "Large Language Models" is really just a super complicated token prediction engine. The "AI" doesn't understand things in the same way as you or I do (and I'm going to refrain from getting into a philosophical discussion about what is true intelligence or understanding since I'm hardly qualified ... I don't really believe that such a discussion is necessary anyway if you just observe some of the things that LLMs will spit out). All that an LLM really "understands" is the statistical relationship between tokens.

And so, I think it's important to remember that when you use an AI to write code for you, the AI is not really "thinking" about the code and coming up with a novel solution, or really doing anything related to "problem solving" in the way that a human would. It is writing the most statistically likely code to fit the given prompt and context. And of course, this is how you end up with things like hallucinated API calls that don't exist, etc. Now lets be fair here and acknowledge that with modern AI coding techniques, in addition to using models that are much better trained now, also involve much more sophisticated ways of managing context provided in any given prompt that reduce the probability of these types of hallucinations from happening.

One fun aspect of LLMs is that unlike a person, LLMs don't learn from their mistakes. If you have to correct it during a session with an LLM, it won't remember that during the next session. So you have to be constantly vigilant. And you're simply not going to be able to account for all of these mistakes in the myriad of Markdown files that you're collecting in your repository for your agent(s) to use. Eventually I'm sure you'd rather be able to use all that context space to hold the actual code in your project after all.

So aside from all that, how good are the results of AI code generation?

I can talk a bit about some very recent high-profile projects and demonstrations to help give some ideas of this.

1. Cursor's AI-built Web Browser

Using ChatGPT 5.2, Cursor assembled a team of agents and tasked them to build a web browser from scratch.

It didn't go that well (this article is notably extremely negative, but it does summarize the timeline of events decently enough).

In the end it was over a million lines of code and barely worked at all. In fact, I remember looking at the Github repository the same day this was first shared by Cursor and noticing the same thing that that other article I linked mentions: that the Github CI was absolutely full of failed builds. I also remember looking through the code and not coming away with the impression that it was well architected at all. It really seemed to be a giant mess. Certainly there was a lot of other people at the time who agreed with that take.

Overall this project is not at all impressive to me from a quality point of view. It honestly feels like garbage. Over a million lines of code and a barely working result isn't something you're going to fix quickly or easily. If you decide to throw human developers at it, it's going to take them a very long time to untangle the messy code. Probably you're better off starting from scratch at that point. If you continue with an AI approach, I suspect this project shows that you end up hitting a "not quite there yet" ceiling and never being able to move past that point.

2. Anthropic's AI-built C Compiler

Using Opus 4.6, Anthropic also assembled a team of agents and tasked them to build a C compiler. They very weirdly claimed that this was a "clean room" implementation despite the fact that Opus is almost certainly trained on code that includes open source C compilers such as GCC. But we'll leave that aside.

This project went better than Cursor's project, but doesn't seem to be without flaws. Notably, its built in assembler and linker are limited and buggy. It also is very poor at optimizing the compiler generated code. Lets not make the mistake of pretending that either of these things would be anything close to a "quick fix."

The big advantage of this project was that they utilized GCC's test suite, which I think at least does clearly demonstrate that if you're dealing with probabilistic unthinking machines, then having something to give them a crystal-clear "success" or "failure" grade at the end of any given task they complete is incredibly important.

Given that the resulting project is about 180,000 lines of code, I don't think that this is a project that you'd continue with human developers, as that is still an awful lot of code that humans didn't write to dive into and become familiar with. If you were going to continue developing this at all, you'd probably end up continuing with an AI approach I would imagine. And I'm not sure I believe that AI is up to the challenge here, because I strongly suspect that if it was, Anthropic would have already done it. There seems to be no reason for them to have stopped short here.

I'm being a bit harder on them on this project because a C compiler is a complicated project, but not a novel one. And they were using GCC's test suite which is a huge advantage for a project of this size. And again, Opus has almost certainly been trained on GCC and LLVM, as well as other open source compilers. One would think it would be able to regurgitate a lot of this from its training data.

3. Anthropic's Claude Code

I think the Claude Code CLI tool is interesting to look at even just from what we can very quickly see publicly. From what Anthropic says themselves, AI is apparently writing 90% of their own code. It is also interesting to see that their own engineers admit that being fully hands-off with a vibe-coding approach is not a good idea for production code. So, we can assume that they aren't entirely vibe-coding this, but that they are letting AI write the vast majority of the code.

The things that catch my attention with this project and why I wanted to include it here:

- I keep reading people say that Claude Code itself feels buggy (even at the same time as you read many of these same people gushing over it).

- The Github repository has 7,783 open issues and 30,815 closed issues at the time of me writing this. The last time I looked a few weeks prior there was about 5,000 open issues and 24,000 closed issues.

That's a lot of issues!

Now let's pivot slightly for just a moment and look at some of the things that Spotify is claiming that its developers are doing:

"As a concrete example, an engineer at Spotify on their morning commute from Slack on their cell phone can tell Claude to fix a bug or add a new feature to the iOS app," Söderström said. "And once Claude finishes that work, the engineer then gets a new version of the app, pushed to them on Slack on their phone, so that he can then merge it to production, all before they even arrive at the office."

So Spotify developers are allegedly using Anthropic's Claude to fix bugs or add features very easily where developer's only need to direct the AI from their phone on their commute to work. Lets just ignore how dystopian it sounds that their developers apparently feel obligated to work during their morning commute.

Anyway, returning to Anthropic Claude Code and the number of open issues. One would imagine that if there is any validity to Spotify's claim at all and the quality of the results they see from that approach, that Anthropic, of all the companies in the world, should be able to apply this very same methodology to Claude Code itself and fix all 7,000 of those issues in short order, no? Not overnight certainly, but they should be able to make headway on this at the very least and I shouldn't be observing an increasing number of open issues each time I look.

I don't think that's an unreasonable take, because I keep reading people over and over claiming that Claude Code is really good at fixing bugs. So ... is it actually? If anyone could make this happen, surely Anthropic would be the one to do it, no? Just think of how much of an insanely effective advertisement that would be for Claude. And yet, the issue count seems to only be growing. They don't seem to be making any headway on it.

I suspect that tells us something.

EDIT 2026-Apr-03: Not even two days after I wrote this post, the source code for Claude Code CLI was accidentally leaked by Anthropic themselves. Oops. So we can now see that, indeed, the code does look exactly like they are using AI to write 90% of their code. I'm not at all surprised by the large and ever increasing number of open Github issues after seeing this.

4. OpenClaw

I have more to say about OpenClaw later, but for now in our discussion about the quality of AI-assisted coding, I feel like I only really need to share this link.

To be "fair" (not that I believe we need to be fair to this absolute turd of a codebase), the main developer of OpenClaw is really taking a much more strict vibe-coding approach (as in, they are clearly not even reviewing the AI-generated code at all) and so I think you have to look at OpenClaw as a kind of "worst case scenario" for AI generated code.

My take-away from these

I think that if you take your hands off of the wheel, you're eventually going to crash.

I really think that's what these projects and others like them demonstrate to us. I don't think this is particularly surprising given that LLMs are essentially glorified unthinking, statistical and pattern matching machines. They are good at recognizing and replicating patterns, but they are not capable of developing a coherent model to understand a problem domain.

I also think that these projects are showing that as project complexity increases, the effectiveness of AI seems to lessen. Probably more research and evidence in this area is needed, however. I fully recognize that I've not made an air-tight case for my views here.

To me, it just seems like these projects are all suffering from a kind of "almost there, but not quite" problem where the end goal is perpetually always juuuust over the horizon and if you just push a little harder you can make it. It's feels a bit like the 80:20 rule where the first 80% of the functionality takes 20% of the time, while the last 20% of functionality takes the remaining 80% of the time. The problem is that I think for large projects which are predominantly being developed with AI-generated code that last 20% can be quite hard, if not impossible, to get through. And attempting to do so is likely going to completely wipe out your alleged productivity boost.

Productivity

This AI hype cycle is all about productivity. You don't have to go far to find developers claiming that their productivity is now 10x what it was due to their use of AI.

On the surface of it, this would seem to be almost believable. If you've seen AI chatbots such as ChatGPT in action, you can see firsthand that they can emit text far faster than any human could type. Then, as a developer, you ask these same AI chatbots some coding questions and you see it produce what appears to be real working code right away at just as fast a pace as it writes plain English responses to you. Wow, this is magical! We've solved coding!

The thing about these self-claims of 10x productivity and such is that it never seems to be accompanied with supporting data to back up the claim. And we certainly don't get to see the code that they produced which allegedly helped them complete their projects 10x faster.

To be clear, I have no doubt that they were able to write some sort of code 10x faster by using AI. Obviously an AI can write code faster. But did that result in the project being completed 10x faster? Was the code correct? Was it bug free or bug ridden? How many times did they have to fiddle with their prompts to the AI because it kept getting things wrong, and did they include that time in their "10x faster" self-estimate? Is the code maintainable (because of course we all know that projects don't end when you ship version 1.0)? How easy or difficult will it be for future developers with or without AI-assistance to keep extending the code?

I've seen these type of productivity claims at my job over the past few months too. We had a company meeting a few months ago where someone was demonstrating some AI coding tool and claimed at one point that it was a "20% to 40% speed up" and did not at all elaborate about how they measured that. Or even if they measured it at all, and that it wasn't just some random number pulled out of their ass. The crazy thing to me about this was that it was presented as a range. "20% to 40%" is a large range. Why such a large range? If you had actually measured it, surely it would be a more precise number, not a range, right? Or at the very least, you'd be able to explain in detail with numbers and examples why it is a range.

Then a couple months later, someone else at my job sent out a company-wide email proudly claiming that a recent experimental project using AI-generated code resulted in a 7x speedup. Again, without one single shred of supporting data to back this claim up at all. Only some vague reference to back of the envelope math (which, unsurprisingly, wasn't shared either).

Why is it that the people making these productivity increase claims never share their actual methods of measuring with complete data to back it up? I honestly do not believe that the vast majority of these people are measuring anything at all. I'm starting to believe that they are "vibe-measuring" if I can call it that.

There's a METR study on the impact of AI on developer productivity that is widely regarded to be a fairly flawed study (a take which I agree with). However, I think one of the most interesting and relevant findings from that study is that the participants in that study who used AI tools self-estimated that they were about 20% faster when in fact they were 19% slower. I think that self-estimate that they were faster before seeing any supporting data is the exact same thing that we see elsewhere in AI-assisted coding discussions today with the self-claimed "10x productivity boost" people.

Measuring developer productivity is notoriously difficult and I've not seen any evidence in my career so far of companies doing anything right in this area. So, the idea that we as an industry are all of a sudden effectively measuring productivity of developers during this AI hype cycle (so that we know we're not just talking out of our asses with self-claims of "10x productivity") is absolutely ludicrous to me.

So what other data is there regarding the effects of AI on software development productivity, and what is it showing us?

First, we see that the time previously spent writing code has been almost entirely replaced with reviewing code. Developers are writing more code with AI assistance, yes, but due to the sheer volume of code now being produced, they need to spend far more time reviewing. And it does appear to be buggier code on average. This particular report shows that the net result is that there is no measurable improvement to the velocity of shipping new software releases or otherwise achieving business outcomes.

Secondly, CircleCI very recently did an analysis of 28 million of their customers CI workflows and again confirmed that developers are writing much more code nowadays (feature branch activity is up significantly), but that this is only translating into shipping releases faster for 5% of teams. They arrive at this conclusion by looking at changes making their way into the main/master branches. The other 95% of teams are either seeing barely any difference in velocity at all or are actually shipping slower now! The median showed a decline by 7%.

AI mandates

Hot on the heels of the topic of productivity and measuring things, we come to "AI mandates" that are sweeping through the industry like wildfire.

Companies like Microsoft and Shopify amongst many others have made AI use mandatory for their employees.

Many of these same companies are tracking their employees AI use and even going so far as to create dashboards and other types of scoring mechanisms or metrics to compare and rate them, tying promotions to AI use, giving negative performance reviews for not being enthusiastic enough about AI, and even letting people go for not using enough AI.

As far as software development goes, this basically feels like we've regressed 30 years. We're back to tracking lines of code as a productivity metric again essentially (in some cases, actually). You hear about these all the time from developers today. Stories about metrics like "time to open a pull request", "number of commits per pull request", "time to approve a pull request", "number of AI suggestions accepted". The list goes on. I mean, I just linked to an article on how difficult it is to measure developer productivity that is based on studies that have looked into this in detail. This isn't new information. But, I guess the "leaders" at all of these tech companies didn't get the memo. Or, they too, have offloaded all of their thinking to AI. Or, I dunno, they're just fucking idiots who are guzzling koolaid by the gallon.

Of course we need to mention Goodhart's law here with all of these ridiculous metrics companies are starting to track. Not that it will change anything, mind you.

The thing that really gets me is that in the past when new technologies, tools, techniques, etc were being hyped up in the industry, you didn't see even remotely this level of insanity with trying to jam it into every single orifice possible. I mean, we saw a bit of this with the cloud hype, and then again with block-chain. But neither of these was remotely at the same level of urgency that surrounds the current AI hype. Each of these hype cycles seems to be getting almost exponentially worse. I'm honestly scared to think what the next tech hype cycle will look like. Hopefully I can retire before then.

I've found that people will generally gravitate on their own to tools that are legitimately useful to them and allow them to do the job easier. You don't have to force a general contractor to use a hammer. Maybe, just maybe, the reason there is some reluctance to use these tools is because there are very legitimate concerns with some of them. Gosh. What a concept!

I think the fact that these company "leaders" are forcing AI use to this extreme and urgency level tells you all you need to know about what exactly is underpinning this hype cycle. It's not productivity. It's FOMO.

And lets be honest, it's also a form of "monkey see, monkey do." I mean, If you're a vision-less pointy-haired boss at a tech company and you're spending all your day reading about other tech companies forcing their staff to use AI tools while their CEO's are gloating about how productive AI is making them (while also conveniently offering no evidence to back up these claims), then you're likely foolish enough to believe that's the special sauce, and you should do the same.

You're going to be left behind

For the last two years we've been hearing this bit of fear-mongering amongst developers that if you don't jump on AI-assisted coding that you're going to be left behind. This is another one that makes zero sense to me and I think is really just another example of "monkey see, monkey do."

One of the smartest decisions (from a "keeping my sanity" and "helping to prevent burnout" perspective) that I think I made in my career over the past decade and a bit was to not jump on the bandwagon of JavaScript development and all the libraries and frameworks that seemed to be re-invented every 6 months. I certainly kept an ear open to what was generally happening in that space, but I didn't spend any time learning or writing JavaScript apps (I will acknowledge that I may have been a bit lucky to have been working with ClojureScript for much of this time for front-end web work ... ClojureScript had (has?) a much more sane ecosystem as compared to JavaScript). I don't ever recall hearing someone tell me that I was going to be left behind during that time, even though it seemed to be all the rage for a long while.

Now in 2026, JavaScript is still very much "all the rage." But I now get to step in and benefit from a lot of churn in the tooling and libraries having somewhat settled down. I can learn the current stuff and get up to speed fairly quickly. If I devote time to it, it's not going to take me a decade to catch up to those people who spent the entire past decade entrenched in the JavaScript world. It might take me a few weeks to a couple months to get somewhat reasonably productive (depending on what your definition of "reasonably productive" is) with a JavaScript framework or two, and then I can keep going from there.

If you have any significant amount of experience as a software developer you should know this. Because you very likely have already had to pivot to a new tech stack in the past, and so you know the drill.

The same thing is true of AI tools. It's not going to take anyone two or three years to catch up from zero, even assuming the worst case scenario that they were living underground in a cave and weren't paying any attention to this space at all.

Like, if you think logically about this for just a few seconds, I think most people would realize this. It literally cannot take anyone new that much time to ramp up on these technologies. Otherwise we are fucked as an industry. How could anyone fresh out of college have ever got into a tech job in the past if this were true? This idea that it would take "too long" to ramp up on a skill has never been true in this industry. Yes, there are many technologies that you cannot learn overnight and immediately go from zero to hero. But the vast majority of technologies we use in this industry won't take you years to become reasonably effective at either. AI tools are absolutely no different.

If I look at the past two to three years of AI tooling, it is changing very rapidly. Even the current methods of agentic coding really only started to become wildly popular over the past 6-8 months or so. There is a lot of previous techniques and tools that have fallen by the wayside since ChatGPT first made its big splash at the end of 2022. If you had been following along in earnest the entire way, there would have been a lot of changes that you would have needed to make to not be "falling behind" if you really believed the people saying that.

I'm quite happy to keep an ear to the ground and see what happens over the course of the next couple years, looking for indications of the dust settling somewhat regarding this initial round of tools and techniques. That doesn't mean I don't play around with it myself at all during that same time (I do), but I see no reason to contribute to personal burnout from trying to keep up to date constantly on all of these changes.

Honestly, I'm of the very firm belief at this point that when you hear someone say "you're going to be left behind" that you can immediately disregard anything further they have to say. They are not an intelligent person capable of critical thinking. They literally just demonstrated that to you by repeating that line.

I would even turn the tables around and say that the people who I think are really going to be left behind are those who are offloading so much of their thinking to AI tools with their skills having atrophied so heavily. My prediction is that in a few years time it will become very obvious who these people are, and I don't think any of us will want to be in that group.

Junior developers

Perhaps junior developers are the ones who may truly be left behind, now that I think about it. But not for the reasons that the pro-AI fear-mongers think.

I'm constantly reading anecdotes from developers working at various companies complaining that their junior developers are not learning the fundamentals anymore. They come out of school and don't learn things because they can just ask an AI and copy/paste the answer. Or not even copy/paste, they just use some agentic coding tools and it writes the code for them directly into the project. What do they really learn from this experience?

What does this mean for the industry going forward? Who is replacing the current generation of senior developers? What does their actual skill set look like?

I don't have any answers here. All that I can say is that this one is legitimately worrying to me. Education and "skilling up" has become really fucked up over the past few years, and it is very concerning.

Some more thoughts on OpenClaw

I very briefly touched on OpenClaw (formerly Clawdbot and then Moltbot) previously. This is an autonomous AI assistant that can execute tasks for you in response to you giving it simple plain English instructions, and it will communicate to you via instant messaging. So, you can ask it to help manage your emails, write blog posts, create pull requests on Github, etc etc.

This is understandably a really compelling tool for a lot of people. This type of tool might even be the "killer app" of this current AI hype cycle.

The problem is that it is, with no exaggeration, a massive security breach waiting to happen. The software is completely vibe-coded. It is full of low-quality code with tons of security vulnerabilities.

Many people were rushing out to buy Mac Minis to run their own self-hosted OpenClaw instance and then failing to lock it down at all leaving it completely exposed on the public internet. Which is of course deeply concerning, because in order get OpenClaw to be able to do things for you such as manage your email or write blog posts for you, you have to give OpenClaw access to these things. So if some attacker breaks into your OpenClaw instance they can easily get access to the same accounts that you gave OpenClaw access to.

I really love how the creator of OpenClaw, Peter Steinberger, responded to the security researchers in the above linked article when they shared their security vulnerability findings with him:

This is a tech preview. A hobby. If you wanna help, send a PR. Once it’s production ready or commercial, happy to look into vulnerabilities.

This is completely wild to me. You would have to be very ignorant on the security implications of this or just plain crazy to read this and then still decide "yes, I want to continue running OpenClaw."

But the worst part is that even if you lock OpenClaw down behind a firewall, and secure the filesystem and running permissions to protect your account credentials, etc etc, you are still not protected. The problem is that it is not possible to 100% securely host OpenClaw assuming that you want to give it access to stuff in order to, you know, do stuff for you.

Put another way, the very capabilities that make OpenClaw compelling is what makes it inherently insecure.

Here I introduce you to the lethal trifecta. This trifecta is composed of:

- Access to your private data. (your emails, your Github account, your blog/website, etc)

- Exposure to untrusted content. (incoming emails, comments on your blog, issues or pull requests on your Github repositories, etc)

- The ability to externally communicate. (compose emails, write posts on your blog, response to issues or pull requests on Github)

The above three parts of the trifecta describe OpenClaw to a T. And the astute observer will recognize that this is not limited to just OpenClaw. Any other LLM-using software solution that is hooked up to these three things is vulnerable to the same issue. So all these other OpenClaw-like assistants that we keep seeing pop up are not any better off.

The problem with LLM prompt injection (or jailbreaking I guess) is that it is incredibly difficult (if not impossible) to defend against if there is the possibility of untrusted user input making its way into an LLM prompt. As explained in the previously linked article about the lethal trifecta, at a low level the input to an LLM is a single stream of tokens. It's not able to distinguish between tokens from your system prompt versus tokens that maybe originated from untrusted user input. A token is a token to the LLM. There is no difference.

That means you're forced to try to protect by sanitizing your untrusted user inputs before they reach your LLM. And if you're talking about emails for example, you have to sanitize plain language. It's not like you're sanitizing data from a CSV file, or something like that. This is actually quite a hard problem to solve! How many different ways do you think there are to write a prompt jailbreak in English? How confident are you that you can write code to catch all of them, including the ones you can't currently envision?

And then of course we hit the other big problem: LLMs are not deterministic. Not even if you set temperature = 0. Here's another article with a good explanation about this.

So, with this in mind, even if you spend many hours tweaking the various Markdown files in your brand new OpenClaw installation to help protect it from prompt jailbreaking, what guarantees do you have that this will work 100% of the time? An attacker effectively has unlimited attempts, so 99.9% effective isn't good enough.

A sad example of OpenClaw FOMO

I share this example because this to me really embodies what I think is the core of the problem with the current AI hype cycle, and its really why I wanted to talk about OpenClaw at all in this post.

Shortly after the initial OpenClaw hype, people at the company I work at began experimenting with it including people in senior leadership roles. They were even hooking it up to actual work systems, including our Zulip chat.

The idea eventually came about that our company would be selling hosting and consulting services for OpenClaw with a preliminary advertisement page on the company website that was making grandiose and, frankly, impossible claims about security.

I spoke up eventually about this, sharing all of the general information above here in this post. And I received the most unexpected pushback that I think I've ever seen in my career from people in senior leadership roles who claim to care a great deal about security. They were dead-set on continuing with their efforts, and insisting that they would be able to find ways to "responsibly" host OpenClaw and that they would be continuing to tweak their various Markdown files to find ways to harden it up.

Absolute insanity.

This is when you know that people are chasing hype. Plain and simple. There is no other explanation. They are chasing dollar signs. They believe that this is the big moment that they can cash in on.

I've since been told that maybe they are starting to come around a bit on this and "see the light" so to speak. I don't know that I believe this yet, since the current plan as I understand it is still to continue offering hosting services of OpenClaw in some form, which to me would indicate that they don't understand the risks. Because if you did you would understand that you should not host it at all.

I'm not aware of anyone else at my company who spoke up openly about this. I did hear in some private chats from a couple people who agreed with my concerns, but that they seemed to be continuing on as directed by senior leadership because it was asked of them. When it comes down to it, I can't really blame them.

And this to me is the other big problem. People are scared to voice their concerns about AI tools. They don't want to be seen as the luddite. Especially not with how terrible the job market is right now. So bad ideas get free reign, as long as it is about AI. It is truly worrying.

I do actually use AI

If anyone actually has bothered to read this far, you might have come to the belief that I don't use these tools at all. I must be a luddite.

Actually I do use some AI. I run my own llama.cpp server at home on hardware I bought specifically for this purpose. It's not the fastest hardware by any means (A Dell Precision 5820 tower with two AMD Radeon Pro W6800 cards), but it is still extremely usable at good speeds. I've been running this setup for about a year and a half (though, I originally started with Ollama).

The models that I am currently playing around with are (in no particular order):

I prefer sticking to the open-source / open-weights LLM model ecosystem instead of the big cloud providers like OpenAI, Anthropic, Google, etc. I do very firmly believe that a big rug-pull is coming SoonTM with respect to pricing because the current financials for these companies just don't make any sense at all. There are already anecdotes circling about companies starting to tighten the purse strings with respect to what is being spent on developer AI account subscriptions. For example, a colleague of mine was telling me about how the company he works for just recently announced that they were not going to pay for Cursor's Max Mode anymore, and how developers in the company Slack chat were reacting not unlike drug addicts being told that they were about to be cut off. Fascinating stuff honestly. Keep your popcorn at the ready.

While the models from OpenAI, Anthropic and Google are clearly still superior, I don't think the open-weights models are all that far behind either. I truly believe that the future of LLMs is going to be self-hosted, not cloud-hosted. Any hardware and software improvements that make cloud-hosted better and more efficient also trickle down to self-hosted. There really isn't a moat here.

I will also acknowledge that I do have strong ethical concerns about how the training data for all of these LLM models has been obtained. This includes all of these companies, including the people behind the open-weights models. At my day job I have to help defend our client servers against an onslaught of bots scraping their content with such intensity that these bots effectively DDoS the server. And the data is crystal-clear when I look at it, these bots are predominantly coming from countries on the other side of the world, and very often from networks like Alibaba Cloud, etc. There's copyright issues all over the training of these AI models too, with companies like Meta being literally caught red-handed admitting to downloading copyrighted works via BitTorrent for the purposes of training their AI models. Yet they barely even get a slap on the wrist. I don't really feel very good about the use of this technology, if I'm being honest.

I think when it comes down to it, the main reason I use it all given the types of things I've written about above in this post, is more to keep tabs on things rather than any "true belief" in the technology. I can see the uses, but yes, I definitely believe it is massively overhyped and is being shoehorned into places that it should not be (e.g., using it in processes that require 100% deterministic output).

However, I do not do agentic development. I definitely do not do any vibe-coding, 'nor spec-driven-development. Those last two are bullshit through and through, by the way.

I keep my use of AI to very small, very focused tasks only. I don't ever let it write code for me. I'll ask it about stuff sometimes such as examples for libraries I am using, things of that sort. But again, I don't ever let it write code for me, 'nor do I copy/paste stuff from an LLM into my codebase. I don't trust it. I do not think that taking your hands off the wheel during software development is a smart decision, 'nor that it leads to consistently good outcomes. Eventually I believe the data will back me up here, but time will tell. It's hard to have rational conversations about this with all of the hype.

I'm tired

Most of all I'm just tired of this hype cycle. I want it to end. I know that I'm not alone in this. It often feels like those of us who share this view are being drowned out by the hype, so its easy to get depressed about this and feel like we're not being heard. I am encouraged that I do see more and more people speaking up about the problems with everything going on with AI in this industry. It is starting to feel like there is a glimmer of hope on the horizon, that some semblance of sanity might yet return.

I wrote this post largely out of anger, frustration and depression. I don't really know how much better it has made me feel now that I've finished writing it. Maybe a little bit, perhaps.